This post is part of a series describing the assessments used to develop the Institute for Research Design in Librarianship (IRDL).

In our 2012 article, we reported the results of a survey we administered to get a sense of the attitudes, involvement, and perceptions academic librarians had about conducting their own original research. One component of that survey was a multi-part question about confidence in the steps of a research project. Question 10 of the survey looked like this:

Q10. On a scale of 1 to 5, with 1 being “Not at all confident” and 5 being “Very confident,” how would you rate your confidence in performing the following steps in a research project?

[Likert scale 1–5 was presented for each entry: 1 Not at all confident; 2 Slightly confident; 3 Moderately confident; 4 Confident; 5 Very confident]

- Turning your topic into a question that can be tested

- Designing a project to test your question

- Performing a literature review

- Identifying research partners, if needed

- Gathering data

- Analyzing data

- Reporting results in written format

- Reporting results verbally

- Determining appropriate format for disseminating results (poster/presentation/article)

- Identifying appropriate places to disseminate results (publication/conference)

Most of the respondents rated themselves at points 3 or 4 of this 5-point scale. We understood that this question was a rather blunt measurement and that the steps of a research project were considerably more nuanced than presented in the survey. It did, however, give us enough information about how librarians were assessing their own confidence to know how to move forward in developing a curriculum for the IRDL program.

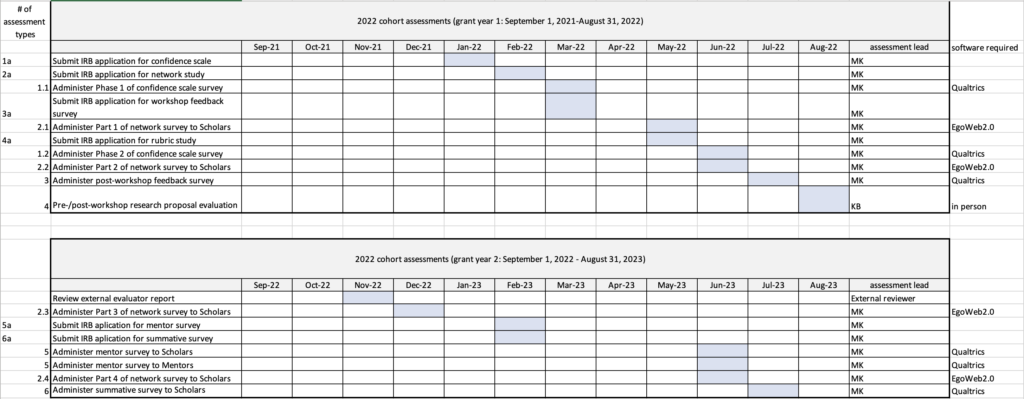

We further explored the design of the 10-item scale to measure confidence in the steps of a research project by conducting an exploratory factor analysis. The results of the analysis led us to remove the item, “Identifying research partners, if needed,” and change the wording of the item about performing a literature review. The resulting nine items clustered into three phases: planning, data, and reporting.

Knowing that the nine-item scale lacked the specificity of measurement we desired, we expanded it to 38 items within the three phases. We retained the 5-point Likert scale as the response format. The development of the scale is described in our 2017 article, “The Development and Use of a Research Self-efficacy Scale to Assess the Effectiveness of a Research Training Program for Academic Librarians.” We have used the 38-item scale in subsequent research (Kennedy & Brancolini, 2018) and as an assessment tool in IRDL. For the purposes of IRDL assessment, in 2022 we condensed the survey to 34 items and replaced the 5-point Likert scale with a 0-100 slider scale, with guiding language of “cannot do at all” near the zero end of the scale, “moderately can do” in the middle, and “highly certain can do” near the 100 end of the scale.

Linked here is the version of the scale in use from 2022-2024.

An example of how the scale was expanded from the original: The first item on the ten-item scale was “Turning your topic into a question that can be tested.” In the 38-item scale, that item was expanded into three: “turning your topic into a question”; “constructing a question that is reasonable in scope”; and “determining if your research topic makes a contribution to the field.” Expanding just this one item enabled us to measure some of the practical nuance within the concept it covers.

How the confidence scale was used for IRDL

Research confidence has been shown to be a predictor of research productivity. There is also some evidence that it is a mediating factor between the research training environment and research productivity. To explore the connection between research training and research self-efficacy, we administered the scale to the IRDL cohorts. The scale was delivered to the Scholars as a pre-test (Time 1) in the spring, before their Summer Research Workshop training, and again as a post-test (Time 2) immediately after they completed the training.

LMU’s IRB reviewed the protocol ahead of Time 1. At Time 1, I sent an email to all participants of the incoming cohort, asking them to complete the survey (delivered on the Qualtrics platform) within a two-week time frame. Time 1 was scheduled in the spring, after acceptance into the program but ahead of receiving any program materials (books or access to online course materials). We released the second survey (Time 2) via email on the last morning of the Summer Research Workshop and gave the participants time to complete it that day.

For our purposes, the survey was not anonymized, so that we could compare each person’s responses between Time 1 and Time 2. We calculated average confidence pre-workshop and post-workshop at the group level, the pre/post change on each measure, and a change score for each participant. Participants’ confidence scores were statistically significantly higher after the Summer Research Workshop (as assessed with a t-test), with a large effect size for each measure. Increasing overall research self-efficacy is intended to increase the probability that the IRDL Scholars will complete their research projects and become accomplished, productive librarian-researchers. Our case study use of the scale confirms that a measurement of research self-efficacy can be a useful tool in assessing the effectiveness of research training for the purpose of improving that training.
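For readers who want to run a similar pre/post comparison, here is a minimal sketch in Python using only the standard library. It is an illustration under stated assumptions, not the analysis script used for IRDL: the function name `paired_summary` and the ratings are hypothetical, the t statistic assumes a paired (dependent-samples) design, and the effect size shown is one common convention (mean change divided by the standard deviation of the change scores). The sketch does not compute a p-value; that would require a t-distribution table or a statistics package.

```python
from math import sqrt
from statistics import mean, stdev


def paired_summary(pre, post):
    """Summarize matched pre/post scores for a single measure."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need at least two matched pre/post pairs")

    # Change score for each participant (Time 2 minus Time 1).
    changes = [after - before for before, after in zip(pre, post)]
    n = len(changes)
    mean_change = mean(changes)
    sd_change = stdev(changes)  # sample standard deviation of the changes
    if sd_change == 0:
        raise ValueError("all change scores are identical; t is undefined")

    return {
        "mean_pre": mean(pre),
        "mean_post": mean(post),
        "changes": changes,
        "mean_change": mean_change,
        # Paired t statistic: mean change over its standard error.
        "t": mean_change / (sd_change / sqrt(n)),
        "df": n - 1,
        # Effect size for paired data (assumed convention, see lead-in).
        "effect_size": mean_change / sd_change,
    }


# Hypothetical 0-100 slider ratings for five participants (not IRDL data).
time1 = [40, 55, 35, 60, 50]
time2 = [70, 80, 65, 75, 85]

result = paired_summary(time1, time2)
print(f"mean change = {result['mean_change']}")
print(f"t({result['df']}) = {result['t']:.2f}")
print(f"effect size = {result['effect_size']:.2f}")
```

Running the function once per item, or once per phase average, mirrors the per-measure comparison described above, and the `changes` list gives the change score for each participant.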

My reflection on the use of this tool for assessing the program

This survey took about 5 minutes to complete, so it was convenient for the workshop participants. Capturing their self-assessments before the workshop and immediately after it was valuable for us as the developers of this model of continuing education training, because it told us whether the content of the workshop was giving the participants the confidence boost we were hoping to see. It was informative for us to learn that the curricular design of the various components of the workshop was producing such a strong increase in confidence for the participants.

The cost of this assessment tool

Because LMU has an institutional subscription to Qualtrics, no grant funds needed to be used for the assessment. No specialized software was needed for the analysis of data.

Kennedy, Marie R., and Kristine R. Brancolini. 2012. “Academic Librarian Research: A Survey of Attitudes, Involvement, and Perceived Capabilities.” College & Research Libraries 73(5): 431-448. https://doi.org/10.5860/crl-276

Brancolini, Kristine R., and Marie R. Kennedy. 2017. “The Development and Use of a Research Self-efficacy Scale to Assess the Effectiveness of a Research Training Program for Academic Librarians.” Library and Information Research 41(124). https://doi.org/10.29173/lirg760

Kennedy, Marie R., and Kristine R. Brancolini. 2018. “Academic Librarian Research: An Update to a Survey of Attitudes, Involvement, and Perceived Capabilities.” College & Research Libraries 79(6). https://doi.org/10.5860/crl.79.6.822

Earlier posts in this series:

Introduction post